
DOI: https://doi.org/10.57187/4685

Retractions serve a vital role in maintaining the integrity of scientific literature. Whether resulting from honest errors, methodological flaws, plagiarism or research misconduct, retractions are an essential mechanism for correcting the scientific record and alerting readers to invalid findings [1–5]. In biomedical research, where publications can influence clinical decision-making, public health policy and patient safety, understanding retraction patterns is particularly critical.

Over the past two decades, the number of retracted scientific publications has increased markedly [4, 6–11]. Bibliometric studies suggest that this trend may reflect both a rise in problematic publications and improved detection efforts, including heightened awareness, better editorial oversight and the use of digital tools to detect misconduct [4, 10, 11]. However, some studies have observed a possible decrease in recent years. For example, one large-scale analysis of retracted articles indexed in the Web of Science (WoS) from 2003 to 2022 found a consistent increase in retractions until 2019, followed by a drop that may be attributable to reporting delays or evolving journal practices [7].

Previous studies have examined the prevalence and causes of retractions across particular disciplines [12–21], geographic regions [22–25], reasons for retraction [26–30] and author-level characteristics, such as comparisons of male vs female authors [31–33]. However, to my knowledge, no studies have specifically aimed to evaluate differences in retraction rates across journals and medical disciplines. As a result, the extent to which retractions, and particularly those due to misconduct, affect the medical literature across specialties remains poorly understood.

A persistent challenge lies in the identification of retracted publications. Databases such as PubMed and the WoS include retraction notices based on publisher-supplied metadata and indexing tags (e.g. “retracted publication”), but these labels are inconsistently applied and sometimes delayed [34, 35]. Alternative resources, such as the Retraction Watch Database (RWD), offer more-comprehensive tracking by combining automated detection with manual curation [36]. However, no single source provides complete coverage, making multi-database approaches necessary to improve retrieval accuracy.

In this study, I aimed to quantify the number and proportion of retracted publications in a defined sample of 131 high-impact medical journals across nine clinical disciplines: anaesthesiology, dermatology, general and internal medicine, clinical neurology, obstetrics and gynaecology, oncology, paediatrics, psychiatry and radiology/nuclear medicine/medical imaging.

This study is part of a broader project on retracted publications in medicine and builds on two previous articles from my group. In the first, we compared the performance of the RWD, PubMed and the WoS Core Collection in identifying retracted publications in the same set of journals; the primary outcome was database coverage rather than retraction patterns themselves [36]. In the second, I used the resulting dataset of 878 retracted publications to examine sex disparities among authors of retracted articles and to compare these patterns with overall authorship benchmarks in biomedical journals [37].

The present study is especially relevant to medical librarians and information professionals, two groups who play a key role in ensuring access to reliable scientific information, identifying problematic publications, and educating researchers and clinicians about research integrity and responsible publishing. By shedding light on the characteristics and distribution of retracted literature in high-impact journals, my findings may inform efforts in research evaluation, collection development and user education.

This cross-sectional study builds on a previously developed dataset created to compare the performance of three major databases (RWD, PubMed, WoS Core Collection) in identifying retracted publications across 131 high-impact medical journals [36]. Using the same set of 878 unique retracted publications retrieved in that study, I sought to quantify the number and proportion of retractions and to compare retraction rates and the proportion of misconduct-related retractions across journals and disciplines. I included journals from nine clinical disciplines: anaesthesiology, dermatology, general and internal medicine, clinical neurology, obstetrics and gynaecology, oncology, paediatrics, psychiatry and radiology/nuclear medicine/medical imaging.

Clarivate’s Journal Citation Reports (JCR) were used to select journals for inclusion. For each of the nine clinical disciplines, I identified the 15 journals with the highest 2023 Journal Impact Factor (JIF). These disciplines correspond to Web of Science/JCR subject categories and were chosen based on their clinical relevance and use in prior bibliometric research [31, 38]. The use of the JIF ensured the inclusion of widely read, high-visibility journals, where retracted publications may have the greatest potential impact. To ensure consistency across disciplines, I selected a fixed number (15) of top-ranking journals per field. This avoided variability in journal counts that would have resulted from using proportional thresholds (e.g. all journals in the first quartile).

Given that some journals are assigned to more than one JCR category, I allowed such journals to appear in more than one discipline. As a result, the final set comprised 131 unique journals rather than 135. Four journals were counted in two disciplines: J Am Acad Child Adolesc Psychiatry (paediatrics and psychiatry), J Neurol Neurosurg Psychiatry (clinical neurology and psychiatry), Neuro Oncol (clinical neurology and oncology) and Ultrasound Obstet Gynecol (obstetrics and gynaecology, and radiology). The full list of journals – including International Standard Serial Numbers (ISSN), e-ISSNs and impact factors – is provided in the supplementary file available for download at https://doi.org/10.57187/4685.

Retracted publications in the 131 selected journals were searched using three sources: the RWD, PubMed and the WoS Core Collection. Each source was searched independently without date restrictions. All records indexed as retracted publications up to 15 December 2024 were included.

The RWD is a curated repository of retracted publications managed by Retraction Watch. Launched in 2018, the database includes entries from PubMed, Scopus, the WoS, publisher websites and institutional investigations [36]. Retractions are identified through a combination of keyword searches (e.g. “retracted”, “withdrawn”), indexing categories and manual verification. I downloaded the complete RWD dataset in CSV format and filtered it to include only retracted publications (excluding corrections and expressions of concern) from the target journals.

I used the PubMed Advanced Search Builder to identify retracted publications in each journal by filtering for the publication type “retracted publication”. Searches were conducted using journal names as well as ISSNs and e-ISSNs to capture variations in metadata. PubMed does not clearly indicate when retraction indexing began, but all retraction records available up to the search date were included.

Retractions in the WoS were identified using the Advanced Search Query Builder, specifying the document type “retracted publication” (field tag = DT). Searches were run using journal names, ISSNs and e-ISSNs. As with PubMed, the starting point of consistent retraction indexing in the WoS is not clearly documented.

Searches in the RWD, PubMed and the WoS were conducted on 15 December 2024. Retracted publications identified from the three databases were consolidated. Records were matched using the PubMed ID (PMID) when available. In the absence of a PMID, matching was performed manually using full citation information (title, authors, journal, year, volume and issue). Manual verification was necessary to account for formatting inconsistencies (e.g. title case, punctuation, extra tags like “retracted article”). The final dataset represented the composite retraction count, used as the numerator for calculating retraction proportions. For each journal, I extracted the total number of publications indexed in PubMed up to 15 December 2024. This served as the denominator for calculating retraction proportions.
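The consolidation step can be illustrated with a minimal sketch. It assumes each record is a dictionary with hypothetical `pmid`, `title`, `journal` and `year` fields, and it replaces the manual citation matching described above with a simple automated normalisation (lower-casing, removing punctuation and “retracted article” tags). It approximates the matching logic and is not the procedure actually used.

```python
import re


def normalise_title(title: str) -> str:
    """Lower-case a title and strip retraction tags and punctuation."""
    text = title.lower()
    text = re.sub(r"\bretracted (article|publication)\b", " ", text)
    text = re.sub(r"[^a-z0-9]+", " ", text)
    return " ".join(text.split())


def record_keys(record: dict) -> list:
    """Return every key under which a record may be matched."""
    keys = [("citation", normalise_title(record["title"]),
             record["journal"].lower(), record["year"])]
    if record.get("pmid"):
        # The PMID is used as an additional key whenever it is available.
        keys.append(("pmid", str(record["pmid"])))
    return keys


def consolidate(*sources: list) -> list:
    """Merge records from several sources, keeping one copy per publication."""
    seen = {}    # matching key -> position in `unique`
    unique = []
    for source in sources:
        for record in source:
            keys = record_keys(record)
            position = next((seen[k] for k in keys if k in seen), None)
            if position is None:
                position = len(unique)
                unique.append(record)
            for key in keys:
                seen.setdefault(key, position)
    return unique


# Hypothetical records, invented for illustration only.
rwd = [{"pmid": "111", "title": "Effect of X on Y.",
        "journal": "J Clin Anesth", "year": 2010}]
pubmed = [{"pmid": "111", "title": "Effect of X on Y",
           "journal": "J Clin Anesth", "year": 2010}]
wos = [{"pmid": None, "title": "RETRACTED ARTICLE: Effect of X on Y",
        "journal": "J Clin Anesth", "year": 2010},
       {"pmid": None, "title": "Another study",
        "journal": "Anesth Analg", "year": 2005}]

unique = consolidate(rwd, pubmed, wos)
print(len(unique))  # 2 unique publications
```

In practice, records that this kind of normalisation fails to match (for example, titles with non-ASCII characters or differing years) would still need manual review.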

In the original development of this dataset, searches and screening of records from the three databases were performed independently by two investigators (PS and MS), with discrepancies resolved by consensus. Details of this manual verification and consensus procedure are fully described in the previous database-comparison publication [36]. The present study used this dataset and did not require additional screening.

To identify misconduct-related retractions, I used the “reason for retraction” field in the RWD. Retractions were classified as misconduct-related if they included at least one of the following criteria: fabrication or falsification of data, images, or results; plagiarism (of text, data, images or full articles); manipulation of results or images; authorship fraud (e.g. forged authorship or lack of approval from authors); fake peer review; salami slicing; use of paper mills; ethical violations such as lack of informed consent or IRB approval; or sabotage of materials. A complete list of these criteria is provided in the supplementary material. This classification approach has been used in several previous studies examining the reasons for retraction [29, 31].
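The classification rule (“at least one misconduct criterion present”) can be sketched as follows. The marker strings below are hypothetical paraphrases of the criteria listed above, not the actual reason labels used in the RWD; the complete list is the one given in the supplementary material.

```python
from typing import Optional

# Hypothetical markers paraphrasing the criteria in the text (assumption:
# the real RWD reason labels are worded differently and are more numerous).
MISCONDUCT_MARKERS = (
    "fabrication", "falsification", "plagiarism", "manipulation",
    "forged authorship", "lack of approval from author",
    "fake peer review", "salami slicing", "paper mill",
    "informed consent", "irb approval", "sabotage",
)


def is_misconduct(reasons: Optional[str]) -> Optional[bool]:
    """Classify one retraction from its free-text reason field.

    Returns None when no reason is recorded, so that such records can be
    excluded from the denominator rather than counted as non-misconduct.
    """
    if reasons is None or not reasons.strip():
        return None
    text = reasons.lower()
    return any(marker in text for marker in MISCONDUCT_MARKERS)


print(is_misconduct("Falsification/Fabrication of Data"))  # True
print(is_misconduct("Error in Analyses"))                  # False
print(is_misconduct(""))                                   # None
```

Returning `None` for a missing reason keeps records without a stated reason out of the misconduct proportion, in line with the complete-case approach described in the statistical methods.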

The country of affiliation was obtained from the “countries” field in the RWD, which is extracted and assigned by Retraction Watch as part of their curation process. Retractions coded with more than one country in the RWD were classified as “multiple countries”.

I acknowledge the potential for misclassification in identifying retracted publications. The risk of false negatives (i.e. missed retractions) has been documented in previous studies [35, 39–41]. To minimise this, I combined data from three major sources (RWD, PubMed, WoS), each offering complementary strengths. The RWD’s exclusive focus on retractions and its multi-source, manually curated process likely improved coverage.

False positives (i.e. articles incorrectly labelled as retracted) are also possible [35, 42]. To assess classification accuracy, I randomly selected 33 records from each database (99 in total) and manually verified their status, following an approach adapted from Schneider et al. [42]. Only two were not true retractions, suggesting a low false-positive rate. These findings support the reliability of the retraction classification in the databases used, though accuracy may vary in lower-impact journals.

I summarised the data using descriptive statistics. For each journal and discipline, I calculated retraction rates (per 1000 publications), misconduct-related retraction rates and the proportion of misconduct among all retractions.
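As an illustration of these three measures, the sketch below recomputes the overall figures and the anaesthesiology figures reported in the Results. The function names are hypothetical; the input counts are taken from the text and from table 2.

```python
def rate_per_1000(events: int, publications: int) -> float:
    """Number of events per 1000 publications."""
    if publications <= 0:
        raise ValueError("publications must be positive")
    return events / publications * 1000


def misconduct_share(misconduct: int, with_reason: int) -> float:
    """Misconduct-related retractions as a percentage of retractions
    for which a reason is available."""
    if with_reason <= 0:
        raise ValueError("with_reason must be positive")
    return misconduct / with_reason * 100


# Overall: 878 retractions among 422,827 publications; 542 of the 811
# retractions with an available reason were misconduct-related.
print(round(rate_per_1000(878, 422_827), 2))   # 2.08
print(round(misconduct_share(542, 811), 1))    # 66.8

# Anaesthesiology (table 2): 382 retractions, 377 with a reason,
# 335 for misconduct, 54,321 publications.
print(round(rate_per_1000(382, 54_321), 2))    # 7.03
print(round(rate_per_1000(335, 54_321), 2))    # 6.17
print(round(misconduct_share(335, 377), 2))    # 88.86
```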

I examined the distribution of publication years, retraction years and the delay in years between publication and retraction. Additional variables were summarised, including the number of authors per retracted article, the type of article and the countries of affiliation of the authors. I also identified all authors listed on retracted publications and computed the total number of unique authors.
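The publication-to-retraction delay and its summary statistics can be sketched as below. The field names and example records are hypothetical, and the quartile method (`inclusive`) is an assumption: the analysis was run in Stata, whose quartile definition may differ slightly.

```python
from statistics import median, quantiles


def delays_in_years(records: list) -> list:
    """Delay between publication and retraction, complete cases only."""
    return [
        r["retraction_year"] - r["publication_year"]
        for r in records
        if r.get("publication_year") is not None
        and r.get("retraction_year") is not None
    ]


def summarise(values: list) -> tuple:
    """Return (median, lower quartile, upper quartile)."""
    q1, _, q3 = quantiles(values, n=4, method="inclusive")
    return median(values), q1, q3


# Hypothetical records, invented for illustration only.
records = [
    {"publication_year": 2000, "retraction_year": 2000},
    {"publication_year": 2009, "retraction_year": 2010},
    {"publication_year": 2009, "retraction_year": 2013},
    {"publication_year": 2001, "retraction_year": 2011},
    {"publication_year": 1990, "retraction_year": 2010},
    {"publication_year": 2005, "retraction_year": None},  # excluded
]

delays = delays_in_years(records)
print(delays)             # [0, 1, 4, 10, 20]
print(summarise(delays))  # (4, 1.0, 10.0)
```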

All analyses were conducted using complete-case analysis; missing values were not imputed. The number of observations used for each calculation is reported in the corresponding tables and figure legends.

This study adheres to the STROBE (Strengthening the Reporting of Observational Studies in Epidemiology) guidelines for cross-sectional studies.

No formal study protocol was prospectively registered in a public repository. The analysis plan was defined a priori during the development of the original methodological study [36] and is fully described in the Methods section. There were no deviations from this plan in the present analysis.

All analyses were conducted using Stata version 15.1 (StataCorp LLC, College Station, TX, USA). No custom software libraries, frameworks or packages beyond the standard Stata installation were used. The aggregated data required to reproduce the main results are available in the supplementary material, and additional clarification on the analysis steps can be provided by the corresponding author on reasonable request.

This study did not involve human participants or personal health-related data and therefore did not require ethics approval under Swiss legislation.

Figure 1 shows the STROBE flowchart illustrating the identification of retracted publications across the RWD, PubMed and the WoS, and the total number of publications indexed in the included journals. A total of 878 retracted publications were identified among 422,827 publications across 131 high-impact journals spanning nine medical disciplines, corresponding to a retraction rate of 2.08 per 1000 publications. Of these, 542 retractions (66.8%) were attributed to misconduct, based on the 811 articles with available data.

Figure 1: STROBE flowchart showing the identification of retracted publications across the Retraction Watch Database (RWD), PubMed and the Web of Science (WoS), and the total number of publications in the included journals.

Figure 2 presents the distribution of publication and retraction years, and figure 3 shows the delay (in years) between publication and retraction, based on the 811 retracted publications with available data. Articles were published between 1965 and 2024 (median: 2009 [2001–2017]) and retracted between 1975 and 2024 (median: 2017 [2011–2021]). The delay between publication and retraction ranged from 0 to 54 years (median: 4 [1–10]).

Figure 2: Publication and retraction years of 878 retracted publications from 131 high-impact journals across nine medical disciplines (n = 811 due to missing data).

Figure 3: Delay in years between publication and retraction for 878 retracted publications from 131 high-impact journals across nine medical disciplines (n = 811 due to missing data).

The number of authors per retracted publication ranged from 1 to 36 (n = 811). A total of 71 articles (8.8%) were authored by one person, 132 (16.3%) by two, 154 (19.0%) by three, 96 (11.8%) by four, 92 (11.3%) by five and 266 (32.8%) by more than five authors. In total, 2864 unique authors were identified across all retracted publications. Authors with five or more retracted publications are listed in the supplementary material. Notably, the eleven authors with at least 20 retractions were all anaesthetists, with six affiliated to institutions in Japan and five in Germany.

Table 1 presents the countries of affiliation of the authors (n = 811). The top five countries were Japan (249 retracted publications, 30.7%), the United States (169, 20.8%), Germany (103, 12.7%), China (50, 6.2%) and the United Kingdom (29, 3.6%). For 91 publications (11.2%), authors had affiliations in more than one country.

Table 1: Countries of affiliation of the authors of 878 retracted publications from 131 high-impact journals across nine medical disciplines (n = 811 due to missing data).

| Country of affiliation | Number of retracted publications | % |
| --- | --- | --- |
| Japan | 249 | 30.70% |
| United States | 169 | 20.84% |
| Germany | 103 | 12.70% |
| Multiple countries | 91 | 11.22% |
| China | 50 | 6.17% |
| United Kingdom | 29 | 3.58% |
| Egypt | 20 | 2.47% |
| Canada | 12 | 1.48% |
| India | 10 | 1.23% |
| Italy | 9 | 1.11% |
| Turkey | 9 | 1.11% |
| Unknown | 9 | 1.11% |
| France | 7 | 0.86% |
| South Korea | 6 | 0.74% |
| Australia | 5 | 0.62% |
| Norway | 4 | 0.49% |
| Spain | 4 | 0.49% |
| Sweden | 4 | 0.49% |
| Austria | 3 | 0.37% |
| Iran | 3 | 0.37% |
| Switzerland | 3 | 0.37% |
| Brazil | 2 | 0.25% |
| Israel | 2 | 0.25% |
| Netherlands | 2 | 0.25% |
| Colombia | 1 | 0.12% |
| Hong Kong | 1 | 0.12% |
| Mexico | 1 | 0.12% |
| Qatar | 1 | 0.12% |
| South Africa | 1 | 0.12% |
| Taiwan | 1 | 0.12% |

The article type was available for 874 retracted publications. Among them, 576 (65.9%) were research articles, 61 (7.0%) letters or comments, 31 (3.6%) guidelines, 28 (3.2%) reviews, 24 (2.8%) case reports, 17 (2.0%) conference abstracts and 2 (0.2%) other types. Additionally, 135 publications (15.5%) were associated with more than one article type.

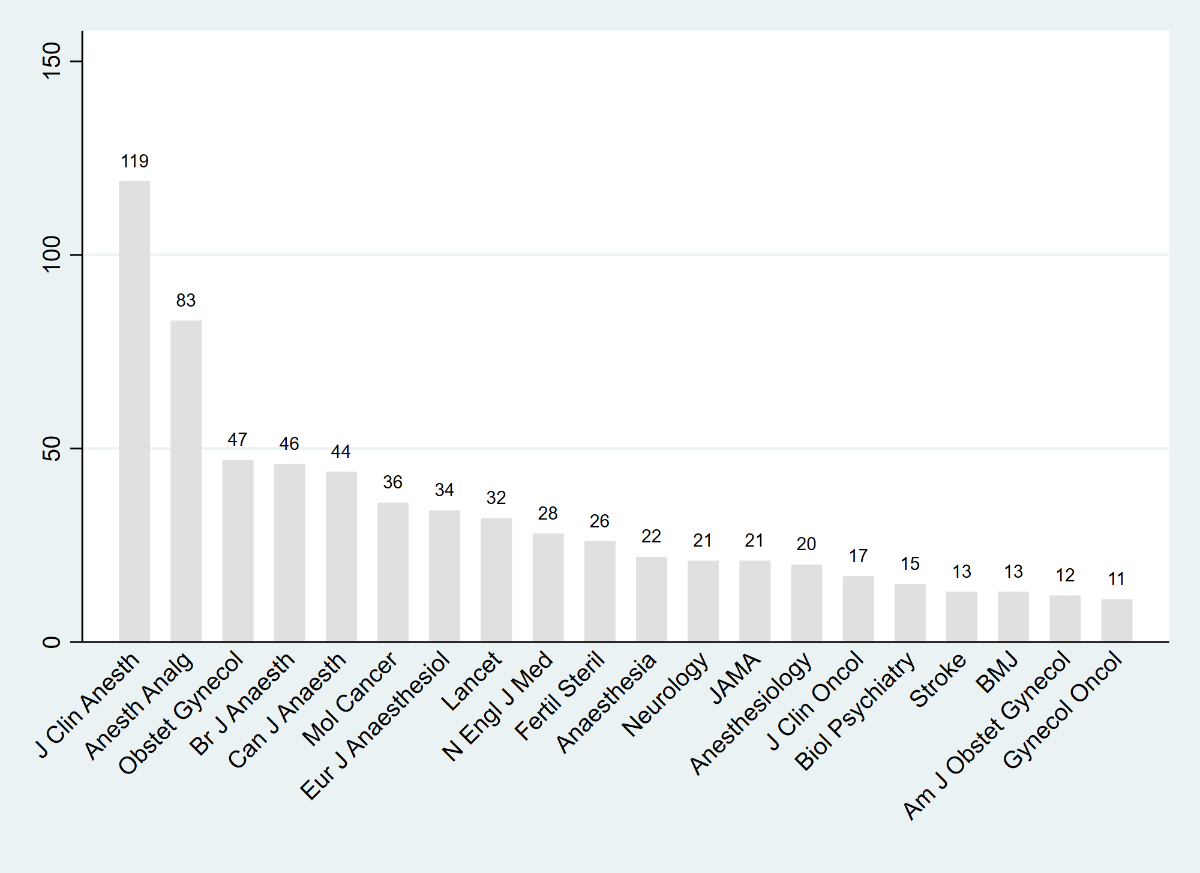

The supplementary material presents, for each journal, the total number of publications, the number of retracted publications (total and for misconduct) and the corresponding retraction rates. Figure 4 displays the 20 journals with the highest number of retracted publications and figure 5 shows the top 20 journals ranked by retraction rate per 1000 publications. The supplementary material also shows the 20 journals with the highest number and highest proportion of misconduct-related retractions. The number of retracted publications per journal ranged from 0 to 119. Specifically, 47 journals had no retractions, 22 had one, 13 had two and the remaining journals had more than two. Among the five journals with the highest number of retractions, four were in anaesthesiology: J Clin Anesth (n = 119), Anesth Analg (n = 83), Obstet Gynecol (n = 47), Br J Anaesth (n = 46) and Can J Anaesth (n = 44). These results have already been reported in our previous study [36]. A similar pattern was observed for misconduct-related retractions, with four of the five journals again in anaesthesiology: J Clin Anesth (n = 117), Anesth Analg (n = 72), Br J Anaesth (n = 44), Can J Anaesth (n = 42) and Mol Cancer (n = 27). In 10 journals, all retractions were due to misconduct. Among the five journals with the highest retraction rates per 1000 publications, four were in anaesthesiology: J Clin Anesth (249/1000), Eur J Anaesthesiol (170/1000), Can J Anaesth (91/1000), Anesth Analg (72/1000) and Lancet Oncol (37/1000).

Figure 4: The twenty journals with the highest number of retracted publications, based on 878 retracted publications from 131 high-impact journals across nine medical disciplines.

Figure 5: The twenty journals with the highest retraction rate per 1000 publications, based on 878 retracted publications from 131 high-impact journals across nine medical disciplines.

Finally, table 2 presents retraction data by discipline, including total publications, total retractions, retractions for misconduct, retraction rates per 1000 publications (total and for misconduct) and the proportion of misconduct-related retractions among all retractions. The three disciplines with the highest number of retractions were anaesthesiology (382, of which 335 were for misconduct), internal medicine (125 and 45) and gynaecology/obstetrics (116 and 48). The highest retraction rates per 1000 publications were observed in anaesthesiology (7.0), gynaecology/obstetrics (2.4) and oncology (2.2). The highest proportions of misconduct-related retractions among all retractions were seen in anaesthesiology (88.9%), oncology (59.5%) and psychiatry (57.5%).

Table 2: Number of publications, number of retracted publications (total and for misconduct), retraction rates (per 1000 publications), misconduct-related retraction rates (per 1000 publications and as a percentage of retractions), by discipline, for 878 retracted publications from 131 high-impact journals across nine medical disciplines (disciplines listed in alphabetical order).

| Discipline | Number of publications | Number of retracted publicationsᵃ | Number of retracted publications with reason availableᵇ | Number of retracted publications for misconduct | Retraction rate (per 1000 publications)ᶜ | Misconduct-related retraction rate (per 1000 publications)ᵈ | Misconduct-related retraction rate (%)ᵉ |
| --- | --- | --- | --- | --- | --- | --- | --- |
| Anaesthesiology | 54,321 | 382 | 377 | 335 | 7.03 | 6.17 | 88.86% |
| Clinical neurology | 44,916 | 62 | 58 | 22 | 1.38 | 0.49 | 37.93% |
| Dermatology | 29,380 | 18 | 16 | 3 | 0.61 | 0.10 | 18.75% |
| Medicine, general & internal | 121,941 | 125 | 116 | 45 | 1.03 | 0.37 | 38.79% |
| Obstetrics & gynaecology | 47,499 | 116 | 87 | 48 | 2.44 | 1.01 | 55.17% |
| Oncology | 42,518 | 92 | 84 | 50 | 2.16 | 1.18 | 59.52% |
| Paediatrics | 35,646 | 20 | 19 | 9 | 0.56 | 0.25 | 47.37% |
| Psychiatry | 33,396 | 44 | 40 | 23 | 1.32 | 0.69 | 57.50% |
| Radiology, nuclear medicine & medical imaging | 41,746 | 33 | 27 | 13 | 0.79 | 0.31 | 48.15% |

a The total number of retracted publications sums to 892 rather than 878, because four journals were assigned to two disciplines: J Am Acad Child Adolesc Psychiatry (PAEDIATRICS and PSYCHIATRY), J Neurol Neurosurg Psychiatry (CLINICAL NEUROLOGY and PSYCHIATRY), Neuro Oncol (CLINICAL NEUROLOGY and ONCOLOGY) and Ultrasound Obstet Gynecol (OBSTETRICS & GYNAECOLOGY and RADIOLOGY, NUCLEAR MEDICINE & MEDICAL IMAGING).

b The reason for retraction was available for 811 of the 878 retracted publications. The total number of retractions with reason available sums to 824 rather than 811, because four journals were assigned to two disciplines (see note a above).

c Retraction rate (per 1000 publications) = (number of retracted publications ÷ number of publications) × 1000.

d Misconduct-related retraction rate (per 1000 publications) = (number of misconduct-related retractions ÷ number of publications) × 1000.

e Misconduct-related retraction rate (%) = (number of misconduct-related retractions ÷ number of retracted publications with reason for retraction available) × 100.
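The double counting described in notes a and b can be checked with a short sketch that sums the discipline-level counts from table 2 and compares them with the totals for unique publications. The variable names are hypothetical; the numbers are copied from the table and the notes.

```python
# (retracted publications, retracted publications with reason available)
# per discipline, as reported in table 2.
TABLE_2 = {
    "Anaesthesiology": (382, 377),
    "Clinical neurology": (62, 58),
    "Dermatology": (18, 16),
    "Medicine, general & internal": (125, 116),
    "Obstetrics & gynaecology": (116, 87),
    "Oncology": (92, 84),
    "Paediatrics": (20, 19),
    "Psychiatry": (44, 40),
    "Radiology, nuclear medicine & medical imaging": (33, 27),
}

UNIQUE_RETRACTED = 878    # unique retracted publications
UNIQUE_WITH_REASON = 811  # unique retractions with a reason available

sum_retracted = sum(total for total, _ in TABLE_2.values())
sum_with_reason = sum(reason for _, reason in TABLE_2.values())

# Retractions counted twice because their journal sits in two disciplines.
print(sum_retracted, sum_retracted - UNIQUE_RETRACTED)        # 892 14
print(sum_with_reason, sum_with_reason - UNIQUE_WITH_REASON)  # 824 13
```

The differences (14 and 13) correspond to the retractions published in the four journals assigned to two disciplines, each of which is counted once per discipline.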

This cross-sectional analysis of 878 retracted publications from 131 high-impact journals across nine medical disciplines revealed notable variation in both the number and proportion of retractions by journal and discipline. Anaesthesiology accounted for the highest number of retracted publications overall and the highest rate of retractions per 1000 publications. The majority of retractions (67%) were attributable to research misconduct, with particularly high proportions observed in anaesthesiology, oncology and psychiatry. My study also highlighted differences in publication-retraction delay, article type, authorship patterns and country of affiliation, offering a comprehensive overview of retraction characteristics in high-impact medical literature.

My findings are consistent with previous bibliometric studies that have documented heterogeneity in retraction rates across scientific journals [7–9, 43]. Prior research has shown that disciplines with intense publication pressure or notable misconduct cases, such as oncology and biology, and more broadly biomedicine, tend to have elevated retraction rates [7, 9, 44]. However, anaesthesiology was not specifically examined in these studies. The disproportionate number of retracted publications in anaesthesiology may reflect a combination of past scandals, common research designs in the field and broader systemic issues such as editorial or institutional oversight [45, 46]. Some of the highest-profile retraction cases in biomedical science have occurred in this field, including those involving Hironobu Ueshima in Japan [47, 48] and Joachim Boldt in Germany [49–53] – their work accounted for more than 200 retractions in my study. These cases may have inflated overall retraction counts and prompted increased retrospective scrutiny of anaesthesiology publications. Furthermore, research in anaesthesiology often involves single-operator trials or studies with small sample sizes and limited external oversight, potentially increasing vulnerability to undetected errors or misconduct. However, the observed retraction patterns may also reflect improved detection mechanisms and a stronger corrective culture in anaesthesiology, rather than a higher incidence of misconduct per se.

The five countries with the most retracted publications in my study were Japan, the United States, Germany, China and the United Kingdom. This partly matches our previous study on misconduct-related retractions from 1996 to 2023, where the top countries were China, the United States, India, Japan and Germany. Four countries appear in both lists, while India ranked eighth in the current analysis. These differences likely reflect variations in methods: the earlier study focused only on misconduct, included all journals and covered a fixed time frame [29]. A study by Fang et al., which investigated retractions for fraud or suspected fraud using PubMed data, similarly found that the United States, Germany, Japan, China and the United Kingdom accounted for more than three-quarters of such retractions [8].

The retraction rate observed in the current study (2.1 per 1000 publications) was higher than those reported in our previous research. In our misconduct-focused country-level analysis, the rate was 0.5 per 1000, i.e. approximately four times lower [29]. This discrepancy may be due to the scope of the current study, which includes all reasons for retraction, and the focus on high-impact journals, where retraction rates tend to be higher [4, 43, 54]. This may reflect both increased scrutiny for articles published in high-impact journals and higher pressure to publish in such journals. In another study by my team examining retractions in primary care journals (2000–2022), we found a retraction rate of 0.1 per 1000 for the 18 primary care journals with a JCR impact factor, compared to 0.3 per 1000 for 117 general internal medicine journals with an impact factor >2 and 0.6 per 1000 across all PubMed-indexed articles [14].

Moreover, I observed that the retraction rate tended to increase in more recent publication years. This trend has also been reported in several other bibliometric studies, which suggests that retraction rates have risen over time due to improved detection mechanisms, growing editorial awareness and the increasing availability of digital tools for identifying scientific misconduct [4, 6–11]. These findings reinforce the idea that retractions are not only more likely to be issued today than in the past, but also more promptly indexed and tracked by databases and watchdog initiatives.

Finally, I found that approximately two-thirds of the retracted publications in the dataset were due to misconduct (67%). This aligns with the findings by Fang et al., who reported a similar proportion of misconduct-related retractions (67%) among retractions indexed in PubMed [4, 8].

These results have several implications. First, the substantial variation in retraction rates by journal and discipline suggests that research integrity risks are not evenly distributed across the biomedical field. Journals and institutions in high-risk disciplines may benefit from additional screening mechanisms, such as data audits or authorship verification procedures. Second, the predominance of misconduct-related retractions underscores the need for ongoing education in responsible conduct of research and for stronger institutional oversight. For researchers, my findings highlight the importance of critically appraising the reliability of published evidence, particularly in fields with higher retraction rates. Future research should explore the downstream impact of retracted publications on clinical practice, citation patterns and public trust.

This study has several limitations. Although I used three major databases and conducted manual verification to reduce classification errors, it is possible that some retracted publications were missed, particularly if they were not indexed or consistently labelled. However, inclusion of the RWD, which is exclusively focused on tracking retractions from multiple sources, likely minimised this risk of false negatives. I did not conduct a systematic evaluation of false positives in the present analysis. However, I randomly sampled 99 records across the three databases and found only two that were incorrectly labelled as retracted publications, suggesting a low false-positive rate. In addition, the focus on high-impact journals reduces the generalisability of my findings to lower-impact or non-indexed journals.

In this study, I quantified the number and proportion of retracted publications across 131 high-impact medical journals and identified clear discipline-related patterns in retraction rates and misconduct-related causes. Anaesthesiology emerged as the field with the highest retraction burden, both in volume and in proportion. These findings underscore the need for continued vigilance in publication practices, greater transparency in the retraction process and further investigation into discipline-specific vulnerabilities. As the scientific community strives to improve research integrity, systematic monitoring of retractions will remain a critical tool for accountability and quality assurance.

The data associated with this article are available as supplementary material and will remain accessible via the journal’s website. Data are openly available without restriction, and further clarification on variable definitions can be obtained from the corresponding author.

I thank Melissa Sebo for assistance with project administration.

This study received no funding.

The author has completed and submitted the International Committee of Medical Journal Editors form for disclosure of potential conflicts of interest. No potential conflict of interest related to the content of this manuscript was disclosed.

1. Steen RG. Retractions in the scientific literature: do authors deliberately commit research fraud? J Med Ethics. 2011 Feb;37(2):113–7.

2. Wager E, Williams P. Why and how do journals retract articles? An analysis of Medline retractions 1988-2008. J Med Ethics. 2011 Sep;37(9):567–70.

3. Fanelli D. How many scientists fabricate and falsify research? A systematic review and meta-analysis of survey data. PLoS One. 2009 May;4(5):e5738.

4. Marcus A, Oransky I. What studies of retractions tell us. J Microbiol Biol Educ. 2014 Dec;15(2):151–4.

5. Fanelli D. Why growing retractions are (mostly) a good sign. PLoS Med. 2013 Dec;10(12):e1001563.

6. Van Noorden R. Science publishing: the trouble with retractions. Nature. 2011 Oct;478(7367):26–8.

7. Koo M, Lin SC. Retracted articles in scientific literature: A bibliometric analysis from 2003 to 2022 using the Web of Science. Heliyon. 2024 Sep;10(20):e38620.

8. Fang FC, Steen RG, Casadevall A. Misconduct accounts for the majority of retracted scientific publications. Proc Natl Acad Sci USA. 2012 Oct;109(42):17028–33.

9. Grieneisen ML, Zhang M. A comprehensive survey of retracted articles from the scholarly literature. PLoS One. 2012;7(10):e44118.

10. Steen RG, Casadevall A, Fang FC. Why has the number of scientific retractions increased? PLoS One. 2013 Jul;8(7):e68397.

11. Brainard J. Rethinking retractions. Science. 2018 Oct;362(6413):390–3.

12. Reith BM, Brand HS. Evaluation of retracted publications related to oral health: a scoping review. Br Dent J. 2025 Mar;•••:

13. Song F, Wu B, Wei G, Cheng S, Wei L, Xiong W, et al. A systematic analysis of temporal trends, characteristics, and citations of retracted stem cell publications. BMC Med. 2025 Feb;23(1):131.

14. Sebo P. Retractions in primary care journals (2000–2022). Scientometrics. 2023;128(12):6739–60.

15. Choudhry HS, Anur SM, Choudhry HS, Kokush EM, Patel AM, Fang CH. Retracted Publications in Otolaryngology-Head and Neck Surgery: What Mistakes Are Being Made? OTO Open. 2024 Jun;8(2):e157.

16. Call CM, Michalakes PC, Lachance AD, Zink TM, McGrory BJ. A Systematic Review of Retracted Publications in Clinical Orthopaedic Research. J Arthroplasty. 2024 Dec;39(12):3107–13.

17. Ferraro MC, Moore RA, de C Williams AC, Fisher E, Stewart G, Ferguson MC, et al. Characteristics of retracted publications related to pain research: a systematic review. Pain. 2023 Nov;164(11):2397–404.

18. Brown SJ, Bakker CJ, Theis-Mahon NR. Retracted publications in pharmacy systematic reviews. J Med Libr Assoc. 2022 Jan;110(1):47–55.

19. Edalati S, Chung T, Govindaraj M, Kraft D, Lerner DK, Del Signore A, et al. Retractions in Otolaryngology Publications. JAMA Otolaryngol Head Neck Surg. 2025 May;151(5):458–65.

20. Audisio K, Robinson NB, Soletti GJ, Cancelli G, Dimagli A, Spadaccio C, et al. A survey of retractions in the cardiovascular literature. Int J Cardiol. 2022 Feb;349:109–14.

21. Levett JJ, Elkaim LM, Alotaibi NM, Weber MH, Dea N, Abd-El-Barr MM. Publication retraction in spine surgery: a systematic review. Eur Spine J. 2023 Nov;32(11):3704–12.

22. Kocyigit BF, Zhaksylyk A, Akyol A, Yessirkepov M. Characteristics of Retracted Publications From Kazakhstan: An Analysis Using the Retraction Watch Database. J Korean Med Sci. 2023 Nov;38(46):e390.

23. Stavale R, Pupovac V, Ferreira GI, Guilhem DB. Research integrity guidelines in the academic environment: the context of Brazilian institutions with retracted publications in health and life sciences. Front Res Metr Anal. 2022 Oct;7:991836.

24. Kocyigit BF, Akyol A. Analysis of Retracted Publications in The Biomedical Literature from Turkey. J Korean Med Sci. 2022 May;37(18):e142.

25. Shi L, Zhang X, Ma X, Sun X, Li J, He S. Mapping retracted articles and exploring regional differences in China, 2012–2023. PLoS One. 2024 Dec;19(12):e0314622.

26. Sebo P. Chinese authors are overrepresented in medical articles retracted for fake peer review or paper mill. Intern Emerg Med. 2024 Nov;19(8):2369–71.

27. Kocyigit BF, Akyol A, Zhaksylyk A, Seiil B, Yessirkepov M. Analysis of Retracted Publications in Medical Literature Due to Ethical Violations. J Korean Med Sci. 2023 Oct;38(40):e324.

28. Pérez-Neri I, Pineda C, Sandoval H. Threats to scholarly research integrity arising from paper mills: a rapid scoping review. Clin Rheumatol. 2022 Jul;41(7):2241–8.

29. Sebo P, Sebo M. Geographical Disparities in Research Misconduct: Analyzing Retraction Patterns by Country. J Med Internet Res. 2025 Jan;27:e65775.

30. Candal-Pedreira C, Ross JS, Ruano-Ravina A, Egilman DS, Fernández E, Pérez-Ríos M. Retracted papers originating from paper mills: cross sectional study. BMJ. 2022 Nov;379:e071517.

31. Sebo P, Schwarz J, Achtari M, Clair C. Women Are Underrepresented Among Authors of Retracted Publications: Retrospective Study of 134 Medical Journals. J Med Internet Res. 2023 Oct;25:e48529.

32. Decullier E, Maisonneuve H. Retraction according to gender: A descriptive study. Account Res. 2021;1–6.

33. Pinho-Gomes AC, Hockham C, Woodward M. Women’s representation as authors of retracted papers in the biomedical sciences. PLoS One. 2023 May;18(5):e0284403.

34. Decullier E, Huot L, Samson G, Maisonneuve H. Visibility of retractions: a cross-sectional one-year study. BMC Res Notes. 2013 Jun;6(1):238.

35. Schmidt M. An analysis of the validity of retraction annotation in PubMed and the Web of Science. J Assoc Inf Sci Technol. 2018;69(2):318–28.

36. Sebo P, Sebo M. Comparing the performance of Retraction Watch Database, PubMed, and Web of Science in identifying retracted publications in medicine. Account Res. 2025;33:2484555.

37. Sebo P. Gender disparities among authors of retracted publications in medical journals: A cross-sectional study. PLoS One. 2025 Nov;20(11):e0335059.

38. Hart KL, Perlis RH. Trends in Proportion of Women as Authors of Medical Journal Articles, 2008–2018. JAMA Intern Med. 2019 Sep;179(9):1285–7.

39. Bakker C, Riegelman A. Retracted Publications in Mental Health Literature: Discovery across Bibliographic Platforms. J Libr Sch Commun. 2018;6(1).

40. Bakker CJ, Reardon EE, Brown SJ, Theis-Mahon N, Schroter S, Bouter L, et al. Identification of retracted publications and completeness of retraction notices in public health. J Clin Epidemiol. 2024 Sep;173:111427.

41. Suelzer EM, Deal J, Hanus K, Ruggeri BE, Witkowski E. Challenges in Identifying the Retracted Status of an Article. JAMA Netw Open. 2021 Jun;4(6):e2115648.

42. Schneider J, Lee J, Zheng H, Salami MO. Assessing the agreement in retraction indexing across 4 multidisciplinary sources: Crossref, Retraction Watch, Scopus, and Web of Science. International Conference on Science, Technology and Innovation Indicators; 2023. https://doi.org/

43. Lievore C, Rubbo P, Dos Santos CB, Picinin CT, Pilatti LA. Research ethics: a profile of retractions from world class universities. Scientometrics. 2021;126(8):6871–89.

44. Ribeiro MD, Vasconcelos SM. Retractions covered by Retraction Watch in the 2013–2015 period: prevalence for the most productive countries. Scientometrics. 2018;114(2):719–34.

45. Fiore M, Alfieri A, Pace MC, Simeon V, Chiodini P, Leone S, et al. A scoping review of retracted publications in anesthesiology. Saudi J Anaesth. 2021;15(2):179–88.

46. Nair S, Yean C, Yoo J, Leff J, Delphin E, Adams DC. Reasons for article retraction in anesthesiology: a comprehensive analysis. Can J Anaesth. 2020 Jan;67(1):57–63.

47. Fraud in hundreds of articles. n.d. https://revistapesquisa.fapesp.br/en/fraud-in-hundreds-of-articles/ (accessed April 12, 2025).

48. Kharasch ED. Scientific Integrity and Misconduct-Yet Again. Anesthesiology. 2021 Sep;135(3):377–9.

49. Bhaskar SB. Fraud in publications-retractions and deterrents. Indian J Anaesth. 2019 Jul;63(7):585–6.

50. Marcus A. A scientist’s fraudulent studies put patients at risk. Science. 2018 Oct;362(6413):394.

51. Carlisle JB. Data fabrication and other reasons for non-random sampling in 5087 randomised, controlled trials in anaesthetic and general medical journals. Anaesthesia. 2017 Aug;72(8):944–52.

52. Hemmings HC Jr, Shafer SL. Further retractions of articles by Joachim Boldt. Br J Anaesth. 2020 Sep;125(3):409–11.

53. McHugh UM, Yentis SM. An analysis of retractions of papers authored by Scott Reuben, Joachim Boldt and Yoshitaka Fujii. Anaesthesia. 2019 Jan;74(1):17–21.

54. Fang FC, Casadevall A. Retracted science and the retraction index. Infect Immun. 2011 Oct;79(10):3855–9.

The supplementary file is available for download at https://doi.org/10.57187/4685.